Google Uses Different Algorithms for Different Languages (with International SEO Guide)


Different Algorithms For Multilingual SEO?

According to Google's Search Advocate John Mueller, the world's largest search engine does not apply one identical set of algorithms to every language: some algorithms are shared across all languages, while others are language-specific.

In a Reddit thread on search engine optimisation best practices, an anonymous user said that the recent BERT update, which deals with semantics, made them wonder whether content written in different languages is weighted differently because of structural and vocabulary differences.

The user asked the Reddit community whether anyone had noticed different ranking factors across the languages they had worked in. That's when John Mueller stepped in and answered that there's no single overarching algorithm at Google, but rather a number of algorithms that each handle their own share of the heavy lifting.

Some of these algorithms, according to Mueller, are responsible for searches in all languages. Others, though, are language-specific.

The example Mueller gave was that certain languages don't separate written words with spaces. This makes such text very hard to break into searchable words using "traditional" algorithms that assume English-style spacing.
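To make this example concrete, here is a minimal Python sketch of why whitespace tokenising breaks down for a language written without spaces. The tiny vocabulary and the greedy longest-match segmenter are illustrative assumptions only; this is not a description of how Google segments text.

```python
def whitespace_tokens(text: str) -> list:
    """Split on whitespace, which works reasonably well for English."""
    return text.split()


def longest_match_tokens(text: str, vocabulary: set) -> list:
    """Greedy longest-match segmentation against a known vocabulary.

    Characters that match no vocabulary entry become single-character tokens.
    """
    max_len = max((len(word) for word in vocabulary), default=1)
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then shrink down to one character.
        for size in range(min(max_len, len(text) - i), 0, -1):
            candidate = text[i:i + size]
            if size == 1 or candidate in vocabulary:
                tokens.append(candidate)
                i += size
                break
    return tokens


# Hypothetical mini-vocabulary, just for the demonstration.
VOCAB = {"我", "喜欢", "搜索", "引擎"}

english = whitespace_tokens("I like search engines")
chinese_naive = whitespace_tokens("我喜欢搜索引擎")
chinese_segmented = longest_match_tokens("我喜欢搜索引擎", VOCAB)
```

With whitespace splitting, the English sentence yields four tokens while the Chinese sentence comes back as one unbroken string. The vocabulary-based segmenter recovers four words from it, which is the kind of language-specific handling Mueller is alluding to.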

Here's Mueller in his own words:

"Search uses lots, lots of algorithms. Some of them apply to content in all languages, some of them are specific to individual languages (for example, some languages don't use spaces to separate words — which would make things kinda hard to search for if Google assumed that all languages were like English)."

Is Google Using Different Algorithms for Different Languages?

The main takeaway here, of course, is that the Google Search algorithm is not some sort of monolith.

While the same algorithms scan most pieces of content, some systems have been written to work exclusively with specific languages (particularly when it has to do with content structure).

In a recent Google Search Central SEO Hangout, Mueller went on to add a little more information about how Google handles these kinds of issues.

Mueller focused heavily on the hreflang attribute and the role it plays in telling Google which URLs are equivalent versions of the same content for different languages or regions.

Mueller said:

"… we basically use the hreflang to understand which of these URLs are equivalent from your point of view. I think it's impossible for us to understand that this specific content is equivalent for another country or another language. Like, there are so many local differences that are always possible."

How To Deal With A Website With Multiple Languages For SEO?

First things first: Google has confirmed that it can identify the primary language of a page that mixes languages, and it's OK to have multiple languages on one page. Google will still try to match the most relevant content on your page to users of the right language. But if you confuse Google, that's on you.

Second, Google's guidelines for multi-regional and multilingual sites recommend using different URLs for each language version of a page rather than using cookies or browser settings to adjust the content language on the page.

If you use different URLs for different languages, use hreflang annotations to help Google search results link to the correct language version of a page.

What is the "Hreflang" Tag?

First introduced by Google in December 2011, hreflang is an HTML attribute that tells search engine spiders and algorithms which language was used to create the content on a specific page.

This is particularly helpful for search engines to identify content relevancy. For example, when a Spanish ("Español") page is annotated with hreflang="es", Google knows that the content was written in Spanish.

On top of that, Google knows that the content is likely to be more relevant for Spanish-speaking searchers as well.
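As a rough illustration of what these annotations look like in practice, the Python sketch below builds the `<link rel="alternate">` tags for a set of language versions. The example.com URLs and the `hreflang_links` helper are hypothetical. The same full set of tags, including a self-referencing one, would go in the `<head>` of every version.

```python
from html import escape
from typing import Optional


def hreflang_links(versions: dict, x_default: Optional[str] = None) -> list:
    """Build <link rel="alternate"> tags for each language version.

    `versions` maps an hreflang value (for example "es") to the URL of
    that version. `x_default` is an optional fallback URL for users whose
    language matches none of the listed versions.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in versions.items()
    ]
    if x_default is not None:
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />'
        )
    return tags


# Hypothetical URLs for illustration only.
VERSIONS = {
    "en": "https://example.com/en/",
    "es": "https://example.com/es/",
}
tags = hreflang_links(VERSIONS, x_default="https://example.com/")
```

Generating the tags from one shared mapping keeps every version pointing at every other version, which avoids the missing return links that commonly break hreflang setups.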

Choosing Target Countries & Strategising Site Structure

When planning your online international expansion and deciding on target markets, you also need to consider how you’re going to target them.

There are four main ways in which the URL structure can reflect internationalisation:

Using Different ccTLDs

Using different ccTLD domains. This is considered the best practice for targeting Russia and China, in particular. An example of this in practice is one of my clients, which specialises in a period pain relief device: Ovira AU, Ovira UK and Ovira US.

International Subdomains

Using a single domain, typically a gTLD, with language-targeted subdomains. An example of this in practice is , which subdomain to differentiate between the

International Subdirectories

Again using a single domain, typically a gTLD, different language and content zones are targeted through subdirectories. Examples of this in practice are my previous employer, Koala Mattress, and one of my clients, ESSA.CN.

Anecdotally speaking, this is often the approach favoured by developers.

Parameter Appending

I don’t recommend implementing this method as it goes against Google's recommendation, but I do see it a lot. This is where a ?lang=de parameter or similar is appended to the URL.

It’s also worth noting that some third-party tools will flag parameter-based hreflang URLs as errors, as their own bots don’t recognise the ?parameter strings.
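The four structures above are easier to compare side by side. The Python sketch below prints what each one looks like for the same page; the `example` brand name and the `localized_urls` helper are hypothetical and only illustrate the URL shapes.

```python
def localized_urls(brand: str, locale: str, cctld: str, path: str = "/") -> dict:
    """Return the same page expressed in each of the four URL structures."""
    return {
        # Country-code top-level domain, e.g. example.de
        "cctld": f"https://{brand}.{cctld}{path}",
        # Language-targeted subdomain on a single gTLD
        "subdomain": f"https://{locale}.{brand}.com{path}",
        # Language-targeted subdirectory on a single gTLD
        "subdirectory": f"https://{brand}.com/{locale}{path}",
        # Parameter appending (not recommended)
        "parameter": f"https://{brand}.com{path}?lang={locale}",
    }


german = localized_urls("example", locale="de", cctld="de")
for structure, url in german.items():
    print(f"{structure:<13}{url}")
```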

Here is a GREAT comparison table for the options above (Source: Google):

How to Best Use the Hreflang Tag

An hreflang value always starts with the target language and can then include further subtags such as:

- Language: “en”, “es”, “zh”, or a registered value.

- Script: “Latn”, “Cyrl”, or other ISO 15924 codes.

- Region: ISO 3166 codes, or UN M.49 codes.

- Variant: A registered variant subtag, such as “valencia” or “pinyin”.

- Extension: Single letter followed by additional subtags.

Regardless of how targeted your tags are, they must follow the format below:

{language}-{extlangtag}-{script}-{region}-{variant}-{extension}
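In practice, Google's documentation describes only the language, an optional script, and an optional region, so most hreflang values use a small subset of the format above. The Python sketch below checks that subset and normalises the casing. The `parse_hreflang` helper is hypothetical: it validates only the shape of the value, not whether each code is actually registered, and it does not handle extlang, variant, or extension subtags.

```python
import re
from typing import Optional

# language[-script][-region]: a simplified subset of the full format.
HREFLANG_PATTERN = re.compile(
    r"(?P<language>[a-z]{2,3})"
    r"(?:-(?P<script>[a-z]{4}))?"
    r"(?:-(?P<region>[a-z]{2}|\d{3}))?",
    re.IGNORECASE,
)


def parse_hreflang(value: str) -> Optional[dict]:
    """Split an hreflang value into subtags, or return None if malformed."""
    match = HREFLANG_PATTERN.fullmatch(value)
    if match is None:
        return None
    script = match.group("script")
    region = match.group("region")
    return {
        "language": match.group("language").lower(),
        "script": script.title() if script else None,
        "region": region.upper() if region else None,
    }


simple = parse_hreflang("en")
regional = parse_hreflang("en-gb")
scripted = parse_hreflang("zh-Hant-TW")
underscore = parse_hreflang("en_GB")  # underscores are a common mistake
```

Here `regional` comes back with the region normalised to `GB`, while `underscore` is `None` because the separator must be a hyphen.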

What Are The Best Practices For Locale-Adaptive Pages (Or International SEO)?

Here are a couple of practical tips to help you make the best use of the hreflang tag, especially if you want to ensure that your content isn't getting shuffled to the back of the Google sandbox because search algorithms can't determine what language it was written in.

Use different URLs for different language versions

Google recommends using different URLs for each language version of a page rather than using cookies or browser settings to adjust the content language on the page. This is because hreflang annotations work at the web page (URL) level, not the domain level.

Let the user switch the page language

If you have multiple versions of a page, as Ovira does, consider adding hyperlinks to the other language versions of the page. That way, users can click to choose a different language version. But avoid automatic redirection based on the user's perceived language. These redirections could prevent users (and search engines) from viewing all the versions of your site.

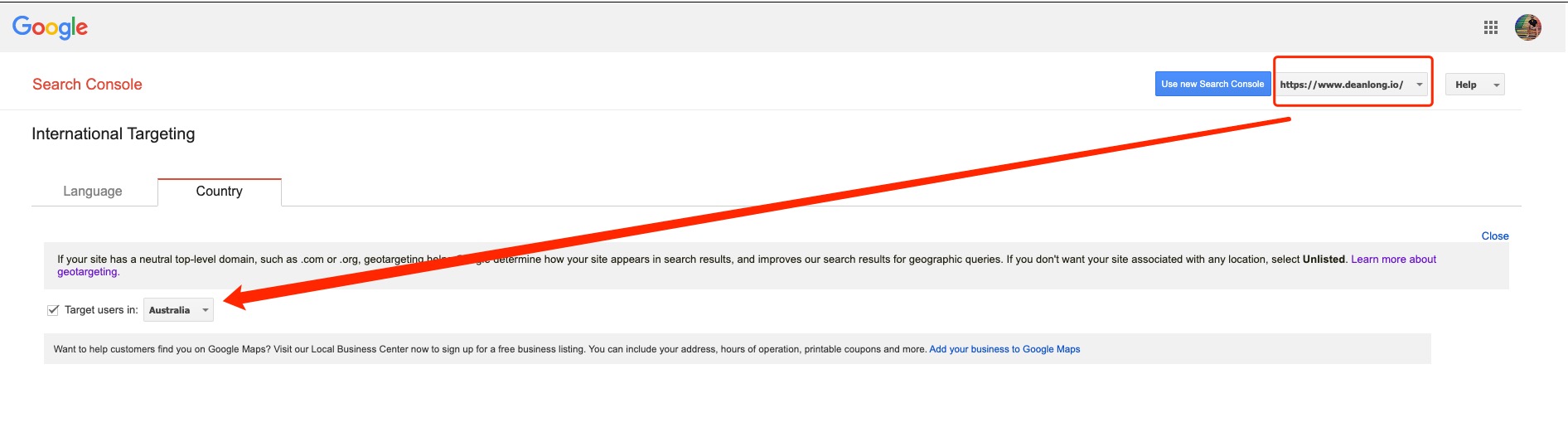
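A language switcher of this kind is just a list of plain links. The Python sketch below renders one, assuming a hypothetical `language_switcher` helper and placeholder example.com URLs. The current version is shown as text, and every other version is an ordinary crawlable link rather than a redirect.

```python
from html import escape


def language_switcher(versions: dict, current: str) -> str:
    """Render an HTML list linking to every other language version.

    `versions` maps an hreflang value to a (label, url) pair.
    """
    items = []
    for code, (label, url) in versions.items():
        if code == current:
            # The version being viewed is shown as plain text, not a link.
            items.append(f"<li><strong>{escape(label)}</strong></li>")
        else:
            items.append(
                f'<li><a href="{escape(url)}" hreflang="{escape(code)}">'
                f"{escape(label)}</a></li>"
            )
    return "<ul>" + "".join(items) + "</ul>"


# Placeholder URLs for illustration only.
SITE_VERSIONS = {
    "en-AU": ("Australia", "https://example.com/au/"),
    "en-GB": ("United Kingdom", "https://example.com/uk/"),
}
switcher_html = language_switcher(SITE_VERSIONS, current="en-AU")
```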

An Extra Tip: Set up Country Targeting if your domain targets a certain country

In a recent #AskGoogleBot video on the Google Search Central YouTube channel, John Mueller talked about how a web server's geographic location affects SEO. He mentioned that the geo-targeting setting in Google Search Console works as a signal for prefix-level location targeting.

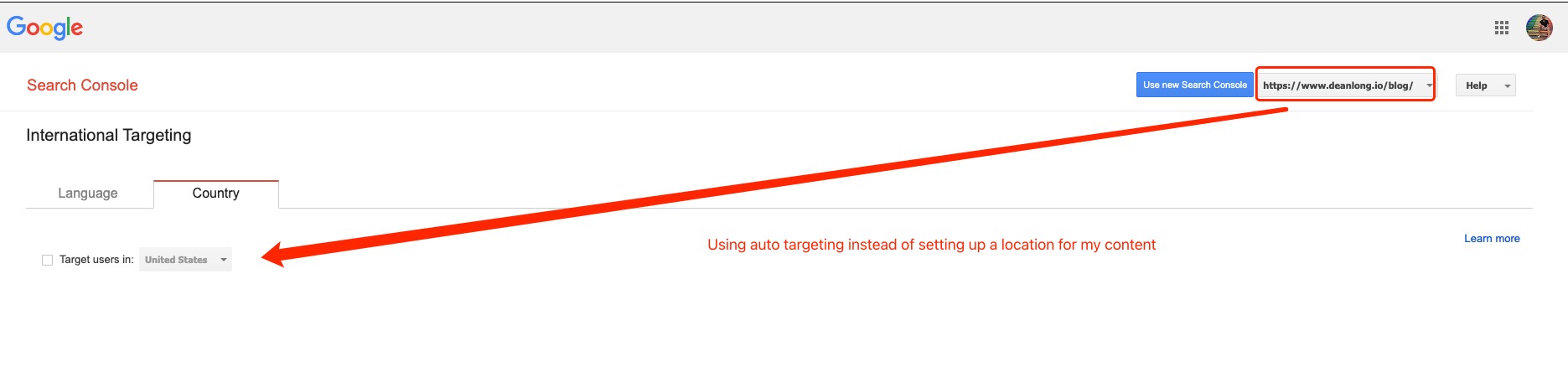

Remember, a domain property in GSC cannot use the International Targeting report; only prefix-level properties can. This means that while Google says the setting is for a "site-wide country target for your entire site, if desired", you can set up different international targeting in different prefix properties.

Take my website, for example: my homepage prefix property targets Australia, but my main content, which lives under the /blog subfolder, targets the United States. It might not be best practice, but I have to admit that it works, based on the data in my GSC.

Update as of Aug 2022: Google Search Console to remove International Targeting report

Google is deprecating the international targeting report in Google Search Console, the company announced. Google wrote, “The International Targeting report has been deprecated, and will be removed from Search Console soon.”

The report will go away on September 22, 2022.

Keep Google Bots' IP Location In Mind For Localised Pages

According to Google's guidelines on implementing dynamic rendering, Google is perfectly capable of crawling dynamically rendered content. In this context, though, businesses often use location-based rendering to show specific localised content to certain visitors, so the content changes with the visitor's location. A good example is Linktree's pricing page.

If you have pages like this, or a similar content strategy, remember that the default IP addresses of the Googlebot crawler appear to be based in the USA. In addition, the crawler sends HTTP requests without setting Accept-Language in the request header. This means that if your site has locale-adaptive pages (that is, your site returns different content based on the perceived country or preferred language of the visitor), Google might not crawl, index, or rank all your content for different locales. That's why Google recommends using separate locale URL configurations and annotating them with rel="alternate" hreflang annotations.
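To see why a crawler that sends no Accept-Language header only ever receives one version of a locale-adaptive page, consider the simplified Python sketch below. The `pick_locale` helper is hypothetical, and it ignores q-values and simply walks the header in order, which real servers usually do not.

```python
from typing import Optional


def pick_locale(accept_language: Optional[str], supported: list, default: str) -> str:
    """Choose a content language from an Accept-Language header.

    Simplification: q-values are ignored and entries are tried in the
    order they appear in the header.
    """
    if not accept_language:
        # No header at all, as with Googlebot: fall back to the default.
        return default
    for entry in accept_language.split(","):
        tag = entry.split(";")[0].strip().lower()
        primary = tag.split("-")[0]
        if tag in supported:
            return tag
        if primary in supported:
            return primary
    return default


SUPPORTED = ["en", "de", "fr"]

german_visitor = pick_locale("de-DE,de;q=0.9,en;q=0.8", SUPPORTED, default="en")
crawler_without_header = pick_locale(None, SUPPORTED, default="en")
```

The German visitor is served `de`, but the request without a header always falls through to `en`, so the German and French content on that URL may never be seen by the crawler. Separate URLs per locale avoid this problem.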

Also, if you block USA-based users from accessing your content, but allow visitors from Australia to see it, your server should block a Googlebot that appears to be coming from the USA, but allow access to a Googlebot that appears to come from Australia. (source: 1)

***Extended Reading: Overview of Google crawlers (user agents)***

In addition, please don't use location-based redirects, since most Google bots come from the USA. John Mueller has retweeted the following:

Do All Search Engines Support Hreflang?

There haven’t been many developments in hreflang support over recent years. However, Yandex officially introduced XML sitemap support in August 2020.

The status of other search engines remains the same.
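For reference, hreflang annotations in an XML sitemap take the form of `xhtml:link` elements nested inside each `url` entry. The Python sketch below generates such a sitemap with the standard library. The `hreflang_sitemap` helper and the example.com URLs are hypothetical, and each search engine's handling of the file may differ.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def hreflang_sitemap(versions: dict) -> str:
    """Build a sitemap where every URL lists all of its language alternates.

    `versions` maps an hreflang value to the URL of that version.
    """
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in versions.values():
        url_el = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url_el, f"{{{SITEMAP_NS}}}loc").text = url
        # Each entry repeats the full set of alternates, itself included.
        for code, alt_url in versions.items():
            ET.SubElement(
                url_el,
                f"{{{XHTML_NS}}}link",
                {"rel": "alternate", "hreflang": code, "href": alt_url},
            )
    return ET.tostring(urlset, encoding="unicode")


# Placeholder URLs for illustration only.
SITEMAP_VERSIONS = {
    "en": "https://example.com/en/",
    "de": "https://example.com/de/",
}
sitemap_xml = hreflang_sitemap(SITEMAP_VERSIONS)
```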

Because of Bing’s global market share (which is widely debated), best practice is to always include both hreflang and the HTML meta language tag as part of an international technical specification.

<meta http-equiv="content-language" content="en-gb">

Closing Thoughts

At the end of the day, it is crucial to understand that the hreflang tag is a signal and not a directive. This means that a whole host of other search engine optimisation factors could end up outweighing this tag, nullifying its effectiveness almost completely.

It also helps to have a basic understanding of Google's journey to create a semantic search engine, which gives you useful background when researching.

It's important to use best search engine practices throughout your content alongside this tag. It's not a tag to necessarily help you increase overall traffic, but instead a tag to improve your odds of that content being served to the most relevant searchers.

References

- https://www.searchenginejournal.com/google-uses-different-algorithms-for-different-languages/434227/#close

- https://www.seroundtable.com/google-search-algorithms-languages-32643.html

- https://moz.com/learn/seo/hreflang-tag

- https://www.reddit.com/r/SEO/comments/s64i0e/does_google_use_the_same_algorithm_for_every/

- https://developers.google.com/search/docs/advanced/crawling/locale-adaptive-pages

- https://twitter.com/JohnMu/status/1498611829551112192

- https://developers.google.com/search/docs/advanced/javascript/dynamic-rendering